Dogma, in the broad sense, is any belief held unquestioningly and with undefended certainty. It is a point of view that people are expected to accept because it is put forth as authoritative, without adequate grounds. This idea helps frame the ‘Earned Dogmatism Effect’, which suggests that being labeled an “expert” may make us more closed-minded.

In a study with six experiments, Victor Ottati, Erika D. Price, and Chase Wilson of Loyola University Chicago and Nathanael Sumaktoyo of the University of Notre Dame tested the Earned Dogmatism Hypothesis. The hypothesis holds that social norms entitle experts to adopt a relatively dogmatic, closed-minded orientation. As a consequence, situations that lead people to see themselves as highly expert should elicit a more closed-minded cognitive style, and the experiments supported this prediction.

Inflated Scores

In one of the tests, participants were randomly assigned to take either an easy (success) or a difficult (failure) political test of fifteen multiple-choice questions. For example, where the easy test asked “Who is the current President of the United States?”, the equivalent question in the difficult test asked “Who was Nixon’s initial Vice-President?”

After completing the test, participants received false feedback about their scores. Those in the easy (success) condition were told they had performed better than 86% of other test takers, whereas those in the difficult (failure) condition were told they had performed worse than 86% of test takers.

Participants in the difficult (failure) condition expressed greater political open-mindedness than those in the easy (success) condition. This suggests that higher self-perceived expertise, even when it rests on false feedback, makes people more closed-minded. Participants who believed they were relatively expert on a topic were less willing to consider others’ viewpoints, just as the earned dogmatism effect predicts.

President Obama’s Policies

In another test conducted by Ottati and his team, participants were asked to list either two (easy condition) or ten (difficult condition) policies implemented by the then US President, Barack Obama. Participants were randomly assigned to one of the two conditions. In the easy condition, participants could advance to the next screen as long as they described at least one policy. In the difficult condition, participants were asked to list ten policies signed by Obama and, if they couldn’t name ten, to write “I don’t know” in the remaining text boxes.

The result? All participants in the easy condition named at least one policy, and more than half named two. In the difficult condition, participants named an average of four policies. Completing the easy task felt like success, while falling well short of ten felt like failure. As the Earned Dogmatism Effect predicts, participants in the difficult condition reported greater political open-mindedness, while participants in the easy condition were less open to other political opinions.

The Conundrum of Confidence & Competence

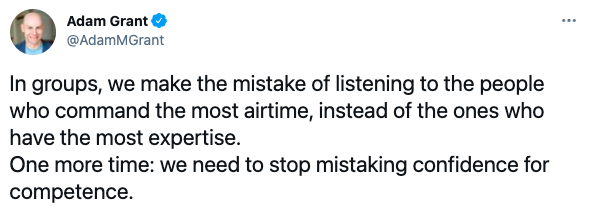

Adam Grant, the top-rated professor at Wharton for seven straight years, says, “We need to stop mistaking confidence for competence.”

The problem is that we equate confidence with competence, but they are very different things. Unjustified confidence is itself a form of incompetence, and likewise, being competent does not automatically justify feeling confident.

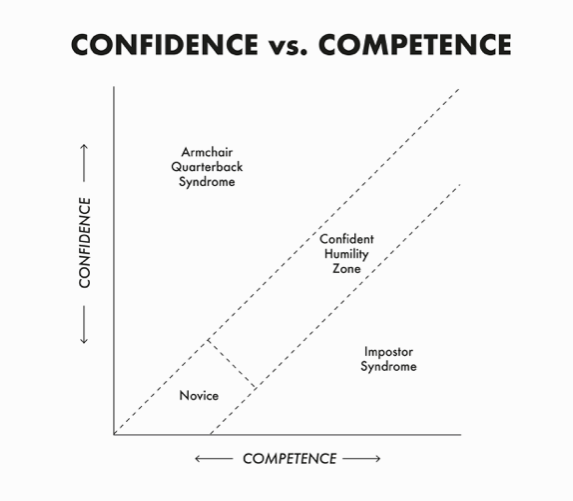

In his recently published book, “Think Again”, Grant describes two syndromes, armchair quarterback syndrome and imposter syndrome, each arising from a mismatch between competence and confidence.

When confidence exceeds competence, we fall victim to armchair quarterback syndrome and become blind to our own weaknesses. Imposter syndrome is the opposite: competence exceeds confidence.

So where do we begin then?

Between the two syndromes lies a sweet spot: the zone of confident humility. The right balance between competence and confidence brings out the best in us and helps us avoid the tricky earned dogmatism effect.

No one likes an arrogant expert. Being definite, confident, and certain are all good things for conveying competence, but being dogmatic, narrow, and inflexible can limit the credibility and usefulness of the expert.

To start with, we need to think deliberately about how we could be wrong. Of course, it is hard for our biased brains to spot our own mistakes. To work around this bias, we can reframe the question as “How could others be right?” Asking it will not guarantee that we catch our own errors, but it helps us understand a different perspective, one in which two views can both be right.

Numerous studies have shown that most of us overestimate our understanding of all sorts of topics, from how a vacuum cleaner works to the details of political policies, a phenomenon known as ‘the illusion of explanatory depth’. It is essential that we establish a realistic sense of our own knowledge. A simple way to counter this intellectual overconfidence is to explain a relevant issue or topic in detail, to yourself or to someone else, either out loud or in writing. The exercise exposes the gaps in our knowledge and thereby breaks the illusion of expertise.

Another way to combat the illusion of expertise comes from a mental model offered by Josh Waitzkin, a former chess prodigy and the author of “The Art of Learning”. Waitzkin says:

It’s so easy to think that we were in the dark yesterday but we’re in the light today… but we’re in the dark today too.

Josh Waitzkin, author, “The Art of Learning”

Just as we look back at ourselves five years ago and laugh at how naive we were, we will likely look back at today five years from now and laugh again. We often say, “I didn’t know before, but I know now.” That very statement implies that there are things we don’t know today that we will know tomorrow. Recognizing this helps us break the illusion and rise above the earned dogmatism effect.

In conclusion, the next time the back of your head tells you during a conversation, “I know, thanks,” tell your biased brain, “You don’t know everything, so let me listen.”
